PUBLISHED: 21 March 2026

DOI: 10.54854/imi2025.04

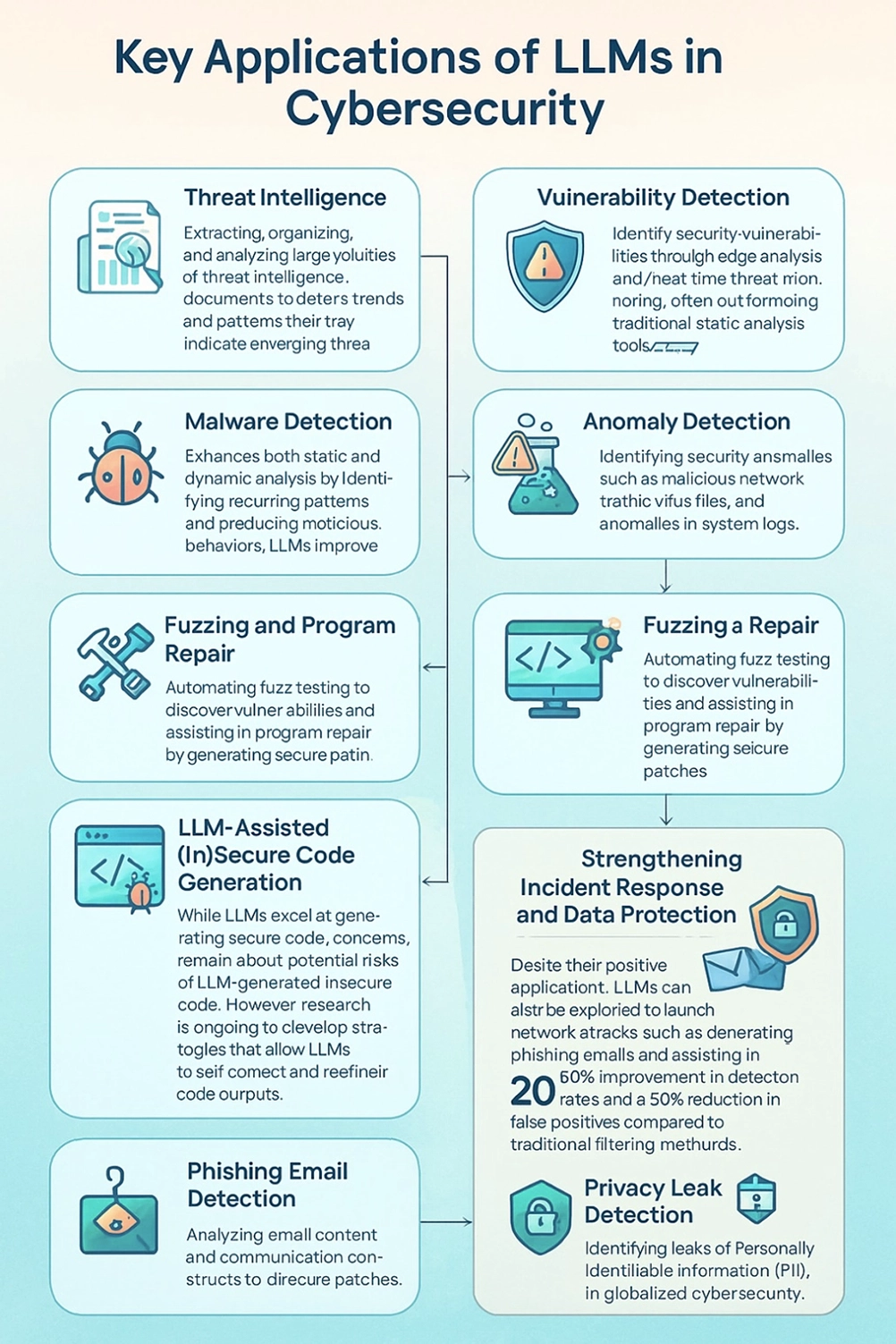

Large Language Models (LLMs) have dramatically changed the digital world in recent years. These systems can generate human-like text, summarize information, write code, answer complex questions, and analyze enormous amounts of data in seconds. However, their rapid adoption has also raised serious questions, especially in the field of cybersecurity. On one hand, these models can strengthen digital security by detecting threats, analyzing suspicious activity, automating security responses, and helping experts understand complex cyberattacks more quickly. On the other hand, the same capabilities can be misused by malicious actors. LLMs can be used to generate convincing phishing emails, write malware code, spread misinformation at scale, or assist in social engineering attacks. This creates what is often described as a cybersecurity paradox: the same technologies that help protect digital systems can also be turned into powerful tools for harm. Beyond security risks, LLMs raise important ethical and legal concerns. One major issue is privacy. Many of these models are trained on massive datasets collected from the internet, often without the clear consent of the individuals who created that data. This raises questions about data ownership, personal privacy, and whether sensitive or copyrighted information is being used responsibly. There are also concerns about accountability. When an LLM produces harmful, biased, or incorrect information, it is often unclear who should be held responsible: the developers, the users, or the organizations deploying the system. This article examines how LLMs sit at the intersection of rapid technological innovation and complex ethical challenges. It explores their growing influence on global digital security while highlighting the risks they pose to privacy, trust, and human rights.
Finally, it discusses the significant governance challenges surrounding LLMs and emphasizes the urgent need for proactive, coordinated international regulation. Without clear rules, transparency, and global cooperation, the benefits of LLMs may be overshadowed by their risks, making responsible oversight not just important but essential.

CITE THIS ARTICLE

A. Saito, "The Cybersecurity Paradox: How Large Language Models Protect and Threaten Digital Security and Human Rights", Innovations in Machine Intelligence (IMI), vol. 5, pp. 28-3, 2025. DOI: 10.54854/imi2025.04
